Keynote 1

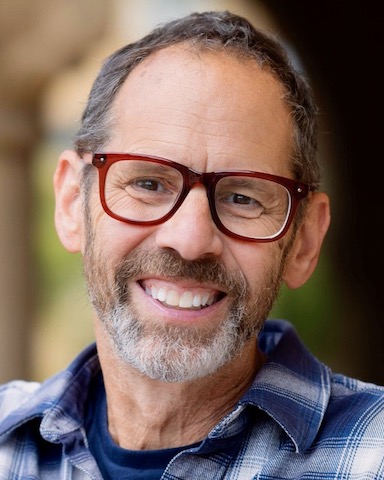

Dan Jurafsky

Dan Jurafsky is Professor of Linguistics, Professor of Computer Science, and Reynolds Professor in Humanities at Stanford University. He is an award-winning teacher, a MacArthur Fellow, the recipient of the Richard C. Atkinson Prize in Psychological and Cognitive Sciences from the National Academy of Sciences, a member of the American Academy of Arts and Sciences, and a Fellow of the Association for Computational Linguistics, the American Association for the Advancement of Science, and the Linguistic Society of America. Together with his students and other colleagues, he studies and teaches about natural language processing and large language models and their applications to the cognitive, linguistic, and social sciences and to social good. His books include the widely used co-authored online textbook “Speech and Language Processing” and the 2014 international bestseller and James Beard Award nominee “The Language of Food”.

Abstract

The Social Failures of Language Models as Conversational Partners

Language models are increasingly used in conversation for information, advice, and emotional support. In this talk I’ll summarize studies in our lab showing that models fail in systematic ways as social interlocutors. We find that language models are socially sycophantic, linguistically overconfident, overly anthropomorphic, and epistemically self-centered. We then show that these flaws have real consequences for users: people interacting with models exhibit overreliance, distorted judgment, and reduced personal responsibility. I’ll discuss datasets and metrics, explore mitigations, and call for design, evaluation, and accountability mechanisms to protect user well-being.

Keynote 2

Nancy F. Chen

Dr. Nancy F. Chen is an ISCA Fellow (2025), AAIA Fellow (2025), and inaugural A*STAR Fellow (2023). She serves as Multimodal Generative AI Group Leader, Deputy Head (Research) for the Aural and Language Intelligence Department, AI for Education Program Head at I2R (Institute for Infocomm Research), and Principal Investigator at CFAR (Centre for Frontier AI Research) at A*STAR. Her research advances multimodal, multilingual large language models for agentic reasoning, inclusive and trustworthy AI, efficient modeling, AI-augmented learning, and AI governance. AI innovations from her lab have led to commercial spin-offs and been deployed at Singapore’s Ministry of Education. Dr. Chen has won 10 Best Paper Awards and 10 Professional Awards; delivered over 40 international keynotes and distinguished lectures; participated in 10 expert panels; and co-organized 20 international conferences, including serving as Program Chair for NeurIPS 2025 and ICLR 2023. Dr. Chen serves on the Advisory Board for IJCAI-ECAI (2026) and the Board of Governors for APSIPA (2024-2026), and has served as an IEEE SPS Distinguished Lecturer (2023) and as a Board Member of ISCA (2021-2024). She won the 2025 Asia Women Tech Award and was honored as one of the Singapore 100 Women in Tech (2021). Her work has received broad media coverage, featured in over seven news and media outlets across English, Chinese, and Tamil. Dr. Chen has long advised government and industry on AI and emerging technologies, beginning at MIT Lincoln Laboratory during her PhD at MIT and Harvard.

Abstract

Language resources are the backbone of AI: they train models, ground linguistic analysis, and benchmark technological progress. Their evolution mirrors—and shapes—the trajectory of computational linguistics, natural language processing, speech technology, and artificial intelligence. This talk traces the long arc of language resources across successive eras—from curated linguistic annotations, to large-scale datasets enabling statistical learning, to representation learning and multimodal pretraining, and now toward alignment, where data shapes not only what models learn but also how they behave in society.

Across this arc, language resources have served both as the fuel for modeling and as reflections of the scientific priorities and institutional forces of their time, from early academic initiatives to coordinated infrastructures such as DARPA, the Linguistic Data Consortium, the European Language Resources Association, and O-COCOSDA, and more recently to industry-driven AI development. As machine learning techniques scale, boundaries across languages, modalities, cultures, and functionalities are increasingly blurred, bringing speech, text, and multimodal interaction into a shared modeling space. Yet this technological convergence also surfaces deeper societal questions around cultural representation, evaluation, and responsible deployment.

We illustrate these developments through examples spanning multicultural alignment, evaluation frameworks, and real-world conversational AI deployment. These include digital services by MERaLiON (Multimodal Empathetic Reasoning in One Network), the first multimodal large language model for Southeast Asia; SingaKids AI Tutor, a multilingual multimodal agent that supports children learning Malay, Mandarin, and Tamil; and Siu Dai, a telebot agent that assists chronic disease patients in navigating lifestyle management.

Ultimately, language resources do more than fuel models—they ground AI systems, connecting machine learning to perception, human interaction, and the cultural and institutional realities in which language lives.